Portal Network and Client Diversity

Client diversity has been widely discussed within the Ethereum community to raise awareness. An Ethereum node is made up of two software clients: the execution layer (EL) client and the consensus layer (CL) client. As of today, four EL client implementations and five CL client implementations are used in production. This diversity makes the Ethereum network more resilient to bugs or issues that may arise in any one implementation. If one client experiences a software bug, the other clients continue to function as expected, preventing a network-wide failure. For this approach to work, however, no single client should run more than 33% of the total network. Finality requires agreement from two thirds of validators, so a bug in a client with more than a third of the network could stop the chain from finalizing.
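The one-third rule above is simple arithmetic over client shares. The sketch below is a minimal illustration that assumes you already have per-client node counts; the client names and counts used in the usage note are hypothetical. It flags any client whose share strictly exceeds one third, and it uses exact fractions so that a share of exactly 1/3 is not misclassified by floating-point rounding.

```python
from fractions import Fraction

# Threshold from the text: no single client should exceed one third of the network.
MAX_SHARE = Fraction(1, 3)


def clients_over_threshold(node_counts: dict[str, int]) -> list[str]:
    """Return, sorted by name, the clients whose share of nodes is strictly above 1/3."""
    total = sum(node_counts.values())
    if total == 0:
        return []  # No data, nothing to flag.
    return sorted(
        name
        for name, count in node_counts.items()
        if Fraction(count, total) > MAX_SHARE
    )
```

For example, hypothetical counts of 50, 30 and 20 nodes give shares of 50%, 30% and 20%, so only the first client is flagged.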

As a result, the Ethereum community has been actively promoting client diversity and encouraging users to switch clients if they currently run one with a large share of the network. Ultimately, client diversity depends on which software node operators choose to run, and operators tend to stick with whichever client they find most convenient.

However, what if the hardware requirements for running a client were reduced dramatically? This is one of the main motivations behind the development of the Portal Network. This article describes how the Portal Network can help increase client diversity by enabling lightweight access to Ethereum.

What is Portal Network?

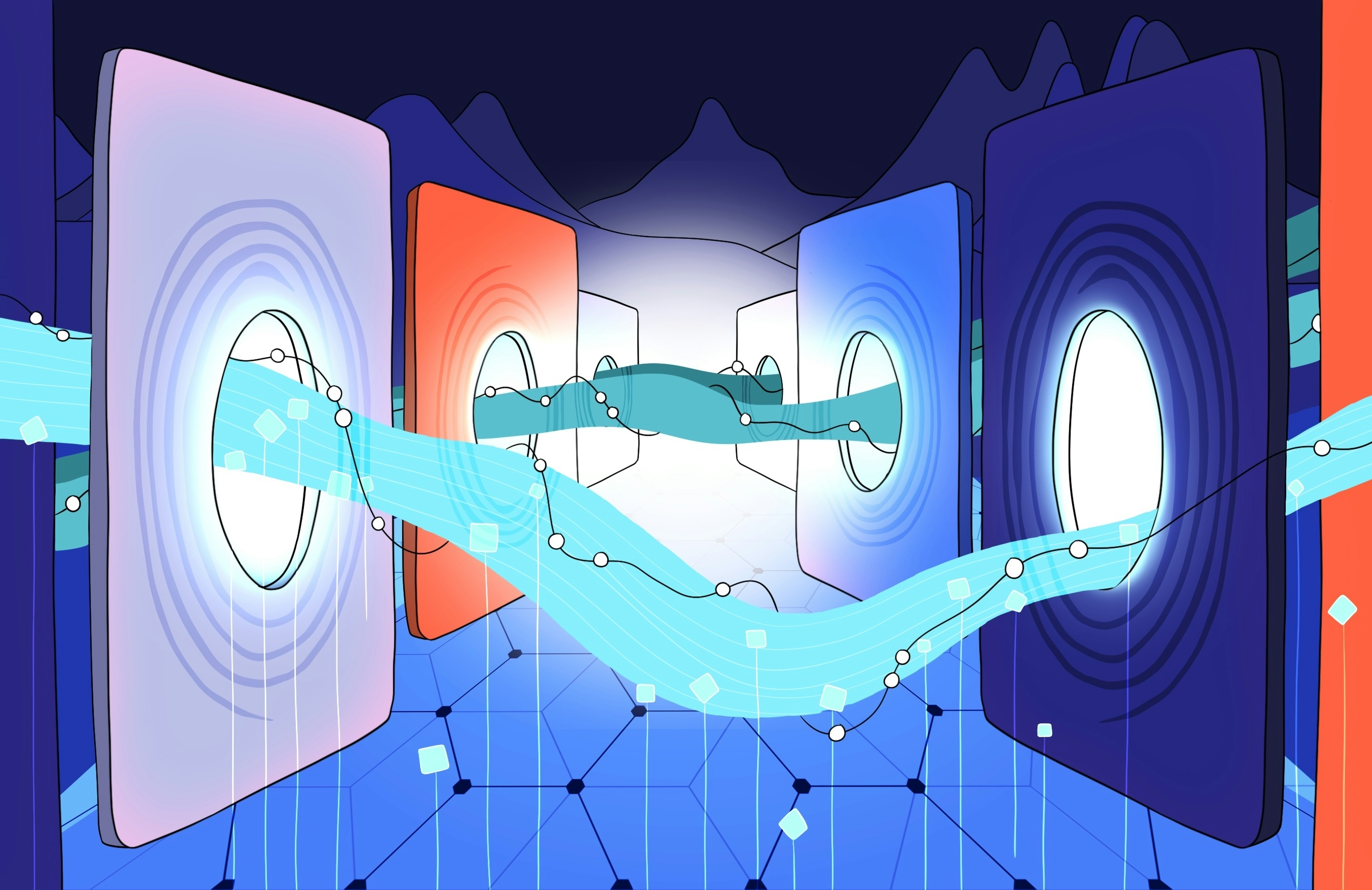

Running a full node to access the Ethereum network can be a significant barrier for many users because of its hardware requirements. These requirements cannot be scaled down by the user, and they will only increase over time, since state growth is permanent until Ethereum migrates to statelessness. The Portal Network addresses this by giving users a high degree of flexibility over how much hardware they devote to accessing Ethereum. It does so through four new networks (or sub-protocols): the History Network, the Beacon Chain Light Client Network, the State Network, and the Transaction Gossip Network.

Users can run Portal Network clients on resource-constrained devices to access Ethereum through any of these four networks. Each network is designed to be independent and offers a different set of functionality. For example, to check the balance of an address, a user needs to connect to the State Network. Because the networks are separate, users can customise their participation: if they only wish to check an address balance, there is no need to join any network other than the State Network.
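To illustrate the idea that users only join the sub-networks they need, here is a small sketch that maps task names to the Portal sub-network serving them and returns the minimal set to join. The task names and the `TASK_TO_NETWORK` mapping are hypothetical assumptions for illustration, not part of any Portal client's API, and the mapping simplifies things in the same way as the balance example above.

```python
from enum import Enum


class SubNetwork(Enum):
    HISTORY = "History Network"
    BEACON_LIGHT_CLIENT = "Beacon Chain Light Client Network"
    STATE = "State Network"
    TRANSACTION_GOSSIP = "Transaction Gossip Network"


# Hypothetical mapping from a user task to the sub-network that serves it.
TASK_TO_NETWORK = {
    "check_balance": SubNetwork.STATE,
    "fetch_historical_block": SubNetwork.HISTORY,
    "follow_chain_head": SubNetwork.BEACON_LIGHT_CLIENT,
    "submit_transaction": SubNetwork.TRANSACTION_GOSSIP,
}


def networks_to_join(tasks: list[str]) -> set[SubNetwork]:
    """Return only the sub-networks required for the given tasks."""
    return {TASK_TO_NETWORK[task] for task in tasks}
```

A user who only checks balances would call `networks_to_join(["check_balance"])` and join just the State Network; a user with every task would join all four.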

How Portal Network Can Help Execution Layer Client Diversity

Various sources are available for estimating client diversity in the execution and consensus layers. Although client diversity has improved in both layers in recent years, the execution layer still has room for improvement.

One way to improve client diversity is to give users a high level of flexibility. This can be achieved by removing the need to run a full node merely to query state or historical data, or to submit transactions, which also reduces costs for users who currently pay node providers for access to on-chain data. Over time, this approach could improve client diversity, since a Portal Network client can be more convenient to run than a full node.

In the long term, once all four networks are fully functional and optimised to deliver performance comparable to RPC providers, the Portal Network may change the infrastructure landscape for dApps and other protocols that depend on Ethereum. If a Portal client is coupled with EVM execution, users will be able to rely solely on the Portal Network to verify new transactions, making the blockchain accessible to more users.

However, one might worry that the Portal Network could suffer from the same lack of client diversity. To address this, three official implementations are currently being built: Trin (Rust), Fluffy (Nim), and Ultralight (JavaScript). Once all four networks are live, users will be able to choose any of these implementations, further increasing client diversity and making the network more resilient against failures caused by software bugs.

Path Towards Long Term Sustainability

For the Portal Network to be sustainable in the long term, a large number of nodes running different implementations must be distributed across the network. According to current statistics from ethernodes.org, an estimated 6,825 nodes are running in the execution layer. This count varies and is largely determined by why individuals choose to run a full node; for example, running a validator requires one.

The goal of the Portal Network is to scale to potentially millions of nodes, with no upper limit on the overall count. In fact, one of its core design objectives is that the network as a whole benefits as the number of nodes grows. Each node is responsible for storing only a portion of the data in the networks it participates in, so as new nodes join, the data load is spread across more participants and each node's individual share can shrink. This is not possible in the execution layer today, because each node is required to store the full history and state.
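The claim that per-node load falls as nodes join can be checked with a toy simulation. This sketch assumes a Kademlia-style keyspace: node IDs are random 256-bit integers, content IDs are SHA-256 hashes of a content key, and each item is stored by the `k` nodes closest to it by XOR distance. Real Portal clients instead let each node choose a storage radius around its node ID, so treat the fixed replication factor `k` and the function names as simplifying assumptions.

```python
import hashlib
import heapq
import random


def content_id(key: bytes) -> int:
    """Derive a 256-bit content identifier by hashing the content key with SHA-256."""
    return int.from_bytes(hashlib.sha256(key).digest(), "big")


def node_loads(num_nodes: int, num_items: int, k: int = 3, seed: int = 0) -> list[int]:
    """Return how many items each node stores when every item is kept
    by the k nodes closest to its content ID by XOR distance."""
    rng = random.Random(seed)  # Seeded so the simulation is reproducible.
    node_ids = [rng.getrandbits(256) for _ in range(num_nodes)]
    loads = [0] * num_nodes
    for i in range(num_items):
        cid = content_id(f"item-{i}".encode())
        # Pick the k nodes with the smallest XOR distance to this item.
        closest = heapq.nsmallest(k, range(num_nodes), key=lambda n: node_ids[n] ^ cid)
        for n in closest:
            loads[n] += 1
    return loads


if __name__ == "__main__":
    for n in (10, 100, 1000):
        loads = node_loads(n, 1000)
        print(n, "nodes: average", sum(loads) / n, "max", max(loads))
```

With 1,000 items and `k = 3`, the network always holds 3,000 copies, so the average per-node load falls from 300 items at 10 nodes to 30 items at 100 nodes, while every item keeps three replicas.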

Reaching such a large node count will require a diverse array of tools, each incorporating a Portal Network client and tailored to a specific purpose.

About Lantern

Pier Two is building Lantern, a C# implementation, in the hope of further supporting the Portal Network. Because Lantern is written in C#, it could benefit the Nethermind client, which is also written in C#, since it can be embedded directly into Nethermind's codebase. This would enable users running Nethermind to sync with the network by fetching historical data from Portal. It also furthers the work on EIP-4444, which allows execution layer clients to prune history older than one year. Once EIP-4444 is implemented, the Portal Network can serve as a decentralised alternative that lets new nodes sync using the History Network, thereby reducing the hardware requirements for running a full node.
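To make the EIP-4444 interaction concrete, here is a minimal sketch of an execution-layer block store that prunes anything older than one year and falls back to the History Network for blocks it no longer holds. `BlockStore` and the `fetch_from_portal` callback are hypothetical names for illustration only; they are not Nethermind's or Lantern's API. The one-year window is 31,536,000 seconds, which works out to 82,125 beacon-chain epochs of 32 twelve-second slots.

```python
from typing import Callable

# One year in seconds: 365 * 86,400 = 31,536,000 (= 82,125 epochs * 32 slots * 12 s).
ONE_YEAR_SECONDS = 365 * 24 * 60 * 60


class BlockStore:
    """Hypothetical EL block store that prunes old history and falls back
    to the Portal History Network for anything it no longer holds."""

    def __init__(self, fetch_from_portal: Callable[[int], bytes]) -> None:
        self._blocks: dict[int, tuple[int, bytes]] = {}  # number -> (timestamp, body)
        self._fetch_from_portal = fetch_from_portal

    def add(self, number: int, timestamp: int, body: bytes) -> None:
        self._blocks[number] = (timestamp, body)

    def prune(self, now: int) -> int:
        """Drop blocks older than one year relative to `now`; return how many were removed."""
        old = [n for n, (ts, _) in self._blocks.items() if now - ts > ONE_YEAR_SECONDS]
        for n in old:
            del self._blocks[n]
        return len(old)

    def get(self, number: int) -> bytes:
        if number in self._blocks:
            return self._blocks[number][1]
        # Pruned (or never stored locally): ask the History Network instead.
        return self._fetch_from_portal(number)
```

The fallback is injected as a callback so the pruning logic stays independent of how a particular client talks to the Portal Network.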